- Anim·oid

- ( lifelike )

Engineering a truly intelligent, self-aware android robot, using state-of-the-art scientific knowledge and machine learning techniques, one piece at a time.

We aim to engineer a truly intelligent android robot by bringing together Neuroscientists, Computer Scientists, Roboticists, Engineers, Machine Learning experts and Hobbyists to synthesize an integrated intelligence one piece at a time, drawing from the cutting edge of scientific knowledge about brains and learning systems.

We’re actively seeking passionate hobbyists with professional experience in, or mastery of, Neuroscience, Machine Learning, Robotics, Computer Science or related relevant disciplines to join our collaboration. If you’re interested and fit the bill please join us!

Check our meetup.com page for the latest news and events.

Why

Creating an intelligence with human-like competence is a first step toward a broadly applicable theory and understanding of intelligence generally. The advantages of achieving AGI (Artificial General Intelligence) are many and far-reaching (see sidebar) and it would stand as a historic scientific and technological turning point.

Our hypothesis is that the scientific knowledge and engineering techniques permitting the construction of truly intelligent machines exist now (see FAQ). Hence, the obvious question is: why hasn’t it been done?

There are, in fact, many academic and commercial enterprises with efforts underway (and possibly more behind closed doors). The task is difficult and requires a sustained and focused effort, attributes that don’t align well with current academic and commercial environments. Consequently, recent history is littered with stalled, abandoned and under-resourced academic and commercial efforts providing only incremental progress.

Academic research projects are typically constrained by funding cycles of one to three years and the brains trust of such projects is often graduate students and post-doctoral researchers with limited tenure. In addition, the contemporary highly competitive scientific funding and publishing environment significantly curtails inter-institution cooperation and public sharing of tools and software. The major products of most academic projects are journal and conference publications (with limited reproducibility of results due to competitiveness), rather than sustainable efforts and publicly available tools, source code and designs.

With some notable recent exceptions (e.g. the DARPA SyNAPSE project and the EU Human Brain Project Simulation Platform), there has been little political will for funding developments toward the creation of truly intelligent or self-conscious machines. This is partly due to a lack of public education about the discoveries of modern Neuroscience, and partly due to the predominant Cartesian Dualist ideology that pervades Western culture [1], which results in a widespread belief that it is inherently impossible for machines to have emotions or be conscious. For a survey of artificial brain projects (most not explicitly aiming for AGI or conscious machines), see [2, 3].

Companies face a different set of hurdles when pursuing general machine intelligence, but the results have been similar. Most companies are too small for internal research teams to justify dedicating significant resources over long durations to projects that don’t contribute to the bottom line in the short to medium term. Conversely, large companies with sufficient resources are often beholden to investors and shareholders, to whom such efforts are a tough sell. Consequently, researchers and engineers often have to be product focused while hoping their ideas are generalizable and transferable.

Hence, a community of scientists, engineers, hobbyists and makers with a shared vision and the passion to vigorously pursue it, not constrained by commercial pressures, funding cycles or expert personnel turnover, is ideal for achieving the creation of a truly intelligent conscious machine.

What

We will engineer a situated humanoid robot. The overarching aspects of the architecture will be end-to-end reinforcement learning using deep learning techniques informed by neuroscience, multiple interacting ‘maps’ including sophisticated bodily maps supporting self-awareness and consciousness, Panksepp-style motivations and emotions contributing to reward shaping, and embodiment. The robot will also be continuously adaptable and utilize episodic and autobiographical memory.

While an exhaustive list of the detailed required characteristics is outside the scope of this description, several critical characteristics that must be engineered into a machine for it to be intelligent are listed below.

Embodiment

While deep convolutional neural networks can perform impressive feats of image recognition, such as recognizing a wide range of everyday objects in cluttered scenes [4], they don’t really understand what they’re ‘seeing’ in the sense that humans do [5, 6]. We relate the objects we see in a multitude of ways: to other sense modalities, to our experience of acting on or with the objects, to prior and expected rewards surrounding the actions the objects afford [7], and to the drives and emotions they elicit.

These are all aspects of understanding that can be engineered into a machine, but they can only exist in the context of a situated embodied acting agent with drives, motivations and a sophisticated awareness of its body and how it relates to the environment in which such objects are embedded.

Development of intelligence in the context of a situated embodied agent allows us to ensure we’re focusing on information processing, not data processing, as is often the case in applications of machine learning. Here we mean information in the sense of semantic understanding, which is (critically) not to be confused with the usage associated with Shannon’s information theory, which relates to entropy (see sidebar).

Motivation

In order for an agent to act at all, it requires motivation to act. Goals emerge as a side effect of pursuing broader overarching goals, and ultimately from generalized motivations or drives, and together form a hierarchy of subgoals [11]. Reinforcement Learning has seen success in numerous domains, such as robotics [12], autonomous flight [13], game playing [14] and financial trading [15]. It requires maximizing a reward function that represents the agent’s optimal behaviour, but specifying such a function is a significant challenge that has become known as the curse of goal specification. In particular, if rewards are significantly delayed from the behaviours partly responsible for a desired outcome, it can be difficult to know which past behaviours to reward, a problem known as the credit assignment problem [16]. One solution is to award intermediate rewards, an approach known as reward shaping [17]. Neuroscience research supports the existence of at least seven basic emotional systems in mammals (SEEKING, RAGE, FEAR, LUST, CARE, GRIEF and PLAY, to use Panksepp’s terminology [18, 19]), which are intrinsically rewarding and consequently constitute a form of reward shaping. While not all of these emotional drives are relevant for an intelligent robot, most can serve to provide overall motivation in an embodied context.
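As a hedged illustration of reward shaping, the sketch below uses potential-based shaping, a well-known formulation chosen here for concreteness; it is not necessarily the variant this project will adopt. The one-dimensional corridor task, the `potential` function and every name in the code are hypothetical.

```python
"""Potential-based reward shaping on a toy corridor (illustrative sketch only)."""
from __future__ import annotations

GOAL = 5      # hypothetical goal position in a 1-D corridor
GAMMA = 1.0   # undiscounted, so the shaping terms telescope exactly


def sparse_reward(next_state: int) -> float:
    """Environment reward: paid only when the goal is reached."""
    return 1.0 if next_state == GOAL else 0.0


def potential(state: int) -> float:
    """Assumed potential function: less negative the closer we are to the goal."""
    return -float(abs(GOAL - state))


def shaped_reward(state: int, next_state: int) -> float:
    """Sparse reward plus the shaping term gamma * phi(s') - phi(s)."""
    return sparse_reward(next_state) + GAMMA * potential(next_state) - potential(state)


def rollout(start: int, moves: list[int]) -> tuple[float, float]:
    """Return (sparse_return, shaped_return) for a sequence of +1 / -1 moves."""
    state, sparse_total, shaped_total = start, 0.0, 0.0
    for move in moves:
        next_state = state + move
        sparse_total += sparse_reward(next_state)
        shaped_total += shaped_reward(state, next_state)
        state = next_state
    return sparse_total, shaped_total


if __name__ == "__main__":
    # One step back, then forward to the goal: every step now carries feedback.
    print(rollout(start=0, moves=[1, 1, -1, 1, 1, 1, 1]))
```

With the shaping term, a step toward the goal earns +1 and a step away earns -1 immediately, instead of the agent waiting for the single delayed reward at the end. Over a whole episode the shaping terms sum to the difference in potential between the final and initial states, so the intermediate feedback does not change which overall outcome is preferred.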

Self Consciousness

Processing the flood of sensory information arriving at a brain, natural or synthetic, is computationally very costly. Extracting higher-level invariant patterns from the voluminous sensory data stream with the available computational resources necessarily means ignoring some of the data [20]. Consequently, among the actions an agent must choose between at any given time is the choice of which sensory information to attend to (and by implication, which to ignore) and with which computational resources. These mechanisms form the basis of attention, which is also the gateway to memory and to awareness, itself distinct from attention [21]. Achieving human-level performance on numerous tasks requires awareness not only of aspects of the external world, but also of the agent’s internal states. This includes awareness of the state of the agent’s body, its emotional states and its computational states related to task execution, and of how those relate to the external world [22, 23]. Distinguishing its own sensorimotor activities from extrinsically caused sensory changes is critical for differentiating self from other, and hence for having a self to which to attribute intentional behaviour and, in turn, for modelling the intentionality of other agents (e.g. people). In short, the agent needs an awareness of itself as an intentional agent. This is a necessary, and perhaps sufficient, condition for self-consciousness [24, 25, 26].
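The idea of choosing which sensory information to attend to under limited computational resources can be sketched as a budgeted selection problem. The example below is a deliberately simple greedy heuristic, not a model of biological attention; the channel names, salience values, costs and the `attend` function are all hypothetical.

```python
"""Attention as budgeted selection of sensory channels (illustrative sketch only)."""
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Channel:
    name: str
    salience: float  # assumed estimate of how useful the channel is right now
    cost: float      # assumed compute cost of processing it this step (must be > 0)


def attend(channels: list[Channel], budget: float) -> list[str]:
    """Greedily pick channels by salience per unit cost until the budget runs out."""
    ranked = sorted(channels, key=lambda c: c.salience / c.cost, reverse=True)
    chosen: list[str] = []
    remaining = budget
    for channel in ranked:
        if channel.cost <= remaining:
            chosen.append(channel.name)
            remaining -= channel.cost
    return chosen


# Hypothetical snapshot of the agent's sensory channels.
EXAMPLE_CHANNELS = [
    Channel("vision_fovea", salience=0.9, cost=4.0),
    Channel("vision_periphery", salience=0.3, cost=3.0),
    Channel("audio", salience=0.6, cost=2.0),
    Channel("touch", salience=0.2, cost=1.0),
    Channel("proprioception", salience=0.5, cost=1.0),
]

if __name__ == "__main__":
    print(attend(EXAMPLE_CHANNELS, budget=7.0))
```

In this snapshot the budget is exhausted after three channels, so peripheral vision and touch are ignored for this step. In a learning agent the salience estimates themselves would be learned and updated rather than fixed by hand.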

Adaptability

Biological intelligences continuously adapt their behaviour, both in response to changing conditions and in order to improve performance. Adaptation spans a range of characteristic time scales, from fast adaptation of primary sensory processing to slow adaptation of high-level behaviour. For example, the dizziness people experience after spinning is a result of the short-term adaptation of visual motion processing to the optical flow sensed while spinning [27]. The longer-term modification of behaviour, such as when learning a complex skill like bicycle riding or playing a musical instrument, is adaptation at the systems level and is less sensitive to short-timescale transients. The various concrete mechanisms of learning in biological systems evolved in response to the advantages of adaptive behaviour via the utilization of memory. Any intelligent machine will require a similar array of mechanisms for continuous adaptation across several characteristic time scales.

While many applications of machine learning employ a distinct training phase, in order to achieve continuous adaptability, all the networks comprising the agent will have their parameters updated during normal performance, though the learning rate may be reduced over time as the agent’s mind ‘matures’. In addition to such continuous adaptation of the various sensory-motor and other maps, the agent will also employ an explicit autobiographical episodic memory. This will require self-awareness in order to distinguish perceived events happening to itself from those happening to external artifacts or agents. Such memories will store not only the sensory-motor details of events, but also where they occurred, according to spatial and place maps analogous to those formed in the entorhinal-hippocampal structures via mechanisms such as place cells and grid cells [28].
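A minimal sketch of learning without a separate training phase is shown below: a single parameter is updated on every observation, with a learning rate that shrinks as the agent ‘matures’ but never reaches zero. The schedule in `learning_rate`, its default values and the `OnlineEstimator` class are assumptions made for illustration, standing in for the far larger networks described above.

```python
"""Continuous online adaptation with a decaying learning rate (illustrative sketch)."""


def learning_rate(step: int, initial: float = 0.5, half_life: float = 100.0) -> float:
    """Assumed schedule: the rate is halved at step == half_life and never hits zero."""
    return initial / (1.0 + step / half_life)


class OnlineEstimator:
    """Tracks a possibly drifting scalar signal; it learns on every observation."""

    def __init__(self) -> None:
        self.value = 0.0
        self.step = 0

    def observe(self, sample: float) -> float:
        """Move the estimate toward the sample by the current learning rate."""
        rate = learning_rate(self.step)
        self.value += rate * (sample - self.value)
        self.step += 1
        return self.value


if __name__ == "__main__":
    estimator = OnlineEstimator()
    for _ in range(200):      # the world initially produces the value 1.0
        estimator.observe(1.0)
    for _ in range(200):      # conditions change; the estimator keeps adapting
        estimator.observe(2.0)
    print(round(estimator.value, 6), learning_rate(estimator.step))
```

Because the rate decays toward zero without reaching it, later changes in the environment are still tracked, only more slowly than early ones.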

How

Development of all aspects of Animoid will be undertaken by a community comprised of a loosely bound group of scientists, engineers and hobbyists who share a passion for intelligent machines and the drive to see synthetic intelligence brought to fruition. In addition to a core group of founders guiding the effort, the expertise of a larger group of expert advisers in Neuroscience, Machine Learning and Robotics will be indispensable. We also hope to build a larger community of interested makers, enthusiasts and hobbyists who will work on whichever aspects of the project they find most interesting, and to continuously incorporate the best of their components and ideas into a unified whole.

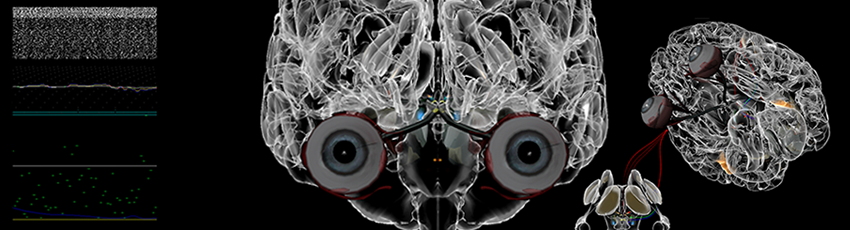

Architecture

The human brain will serve as a guiding structure, though the goal will not be to explicitly duplicate its every function in detail. By going through a catalog of known anatomical brain structures and examining the leading theories as to the likely function of each, and how they relate to the whole, it will be possible to decide which functions are needed and which technologies are most appropriate for implementation of each. Where alternative theories and technological approaches exist, multiple implementations can be made and evaluated against each other within the context of the whole operating agent.

Individual components may employ different techniques including various types of Artificial Neural Networks (ANN) and Spiking Networks of various levels of biological fidelity, depending on performance, computational tractability and facility for integration into the whole.

While individuals may wish to focus on specific aspects of the agent, such as particular sensory modalities, motor control, episodic memory etc., a loose overall architecture that ties all such components into a whole unified by end-to-end reinforcement learning will be established. While the exact terms that comprise the reward function will evolve over time, they will initially be informed by Panksepp’s motivations.
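One way to picture a reward function whose terms evolve over time is as a weighted sum of drive signals added to any task reward. The sketch below assumes that form purely for illustration; the chosen drives, the weights in `DRIVE_WEIGHTS`, the `intrinsic_scale` parameter and the function names are hypothetical and would be subject to revision.

```python
"""A reward built from weighted drive signals (illustrative sketch only)."""
from __future__ import annotations

# Hypothetical weights for a subset of Panksepp-style drives.
# Positive weights reward a drive being satisfied; FEAR is penalized.
DRIVE_WEIGHTS: dict[str, float] = {
    "SEEKING": 1.0,
    "PLAY": 0.5,
    "CARE": 0.5,
    "FEAR": -2.0,
}


def intrinsic_reward(drive_signals: dict[str, float],
                     weights: dict[str, float] = DRIVE_WEIGHTS) -> float:
    """Weighted sum of the current drive activations (each assumed to lie in [0, 1])."""
    unknown = set(drive_signals) - set(weights)
    if unknown:
        raise KeyError(f"no weight defined for drives: {sorted(unknown)}")
    return sum(weights[name] * level for name, level in drive_signals.items())


def total_reward(task_reward: float,
                 drive_signals: dict[str, float],
                 intrinsic_scale: float = 0.1) -> float:
    """Task reward plus a scaled intrinsic term that provides intermediate feedback."""
    return task_reward + intrinsic_scale * intrinsic_reward(drive_signals)


if __name__ == "__main__":
    # Exploring something novel while mildly afraid.
    print(total_reward(0.0, {"SEEKING": 0.8, "FEAR": 0.1}))
```

Keeping the terms in a plain mapping means a term can be added, removed or re-weighted without touching the learning code that consumes the reward.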

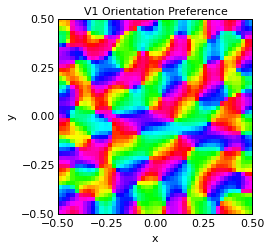

One common theme seen throughout the brain is the interaction of multiple topographically organized ‘maps’. The simplest maps to understand are primary sensory maps, found mainly in the thalamus and connected cortex, such as the LGN and primary visual cortex area V1, which is a two-dimensional array, or map, of the visual scene registered retinotopically. Similar maps exist for audition, for somatosensation (touch, mapping the body surface), for motor control in the motor cortex, and so on. These maps are registered, in turn, to ‘higher level’ multi-sensory maps, such as the three-dimensional maps of objects around the agent in eye-centered, head-centered and body-centered coordinate frames found in the posterior parietal cortex [30].
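The registration between eye-centered, head-centered and body-centered frames can be illustrated with ordinary rigid-body transforms. The sketch below is a top-down, two-dimensional simplification using hand-written geometry rather than learned maps. The axis convention (x forward, y to the left, positive yaw turning left) and all function names are assumptions.

```python
"""Re-expressing a location across eye, head and body frames (2-D sketch only)."""
from __future__ import annotations

import math

Point = tuple[float, float]


def rotate(point: Point, angle_rad: float) -> Point:
    """Rotate a point counter-clockwise about the origin."""
    x, y = point
    c, s = math.cos(angle_rad), math.sin(angle_rad)
    return (c * x - s * y, s * x + c * y)


def to_parent_frame(point: Point, child_yaw_rad: float, child_origin: Point) -> Point:
    """Express a point given in a child frame in the coordinates of its parent frame."""
    rx, ry = rotate(point, child_yaw_rad)
    ox, oy = child_origin
    return (rx + ox, ry + oy)


def eye_to_body(point_eye: Point,
                eye_yaw_rad: float,
                head_yaw_rad: float,
                eye_offset_in_head: Point = (0.0, 0.0),
                head_offset_in_body: Point = (0.0, 0.0)) -> Point:
    """Chain the eye-to-head and head-to-body transforms."""
    point_head = to_parent_frame(point_eye, eye_yaw_rad, eye_offset_in_head)
    return to_parent_frame(point_head, head_yaw_rad, head_offset_in_body)


if __name__ == "__main__":
    # An object 1 m straight ahead of the eye, with the eye and the head
    # each turned 45 degrees to the left, lies directly to the body's left.
    x, y = eye_to_body((1.0, 0.0), math.radians(45), math.radians(45))
    print(round(x, 6), round(y, 6))
```

The same object therefore has different coordinates in each frame, and reaching for it requires the body-centered version, which is why such maps need to be kept in register as the eyes and head move.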

Simulation

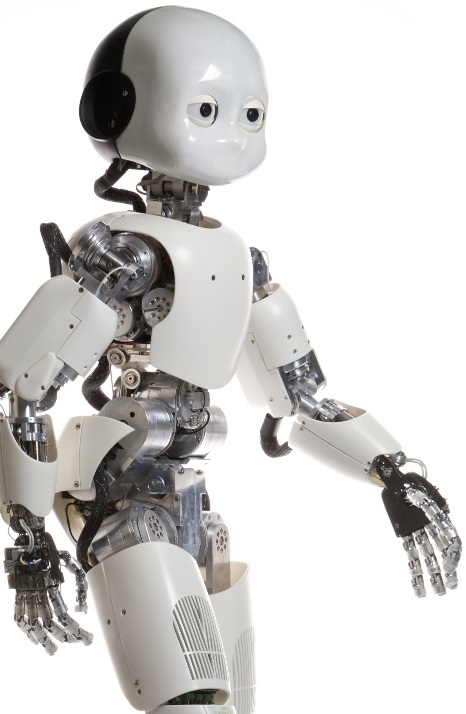

We anticipate that, initially, work will focus on the overall architecture and that specific components will be developed in the context of a 3D simulated environment and robot, employing tools such as SimSpark or Player. Embodiment is a critical requirement and consequently, in subsequent phases, robots will need to be built or acquired. A number of humanoid robots are available in what is a growing market, for example the Nao and Pepper robots from Aldebaran, the Open Source hardware iCub robot created by the EU RobotCub project, and Robotis’ OP2 Open Platform. Until then, it would be preferable to employ a robotic platform for which a realistic simulation environment is available.

Computation

Neural Networks are computationally expensive and the size and depth of the networks required to fully realize all the components of a complete intelligence are considerable. The use of conventional PC clusters has meant that such large-scale simulations have thus far been restricted to large companies and government laboratories with supercomputing resources. Recently, however, neural network toolkits such as Caffe (with NVIDIA’s cuDNN) and Theano have embraced GPUs for higher-performance processing. This brings simulation of small to moderate sized networks within reach of modest resources, particularly in combination with cloud-based GPU compute services like Amazon’s EC2. While using GPUs will enable initial smaller-scale development, ultimately scaling up to larger networks will require hardware acceleration. One example demonstrating the enormous speedup and power reduction afforded by dedicated neural hardware is IBM’s TrueNorth chip, which provides 1 million spiking neurons and 256 million synapses on a single chip that consumes only 70 mW [31]. Another, focused on ANN implementations, is the NeuFlow dataflow architecture being commercialized by TeraDeep.
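The TrueNorth figures quoted above can be turned into per-neuron numbers and a rough sizing rule. The sketch below uses only the three figures from the text. The linear scaling in `chips_needed` is a naive assumption that ignores inter-chip communication and any mapping overhead, and the function names are hypothetical.

```python
"""Back-of-the-envelope arithmetic from the quoted TrueNorth figures (sketch only)."""
import math

# Figures quoted in the text for a single chip.
NEURONS_PER_CHIP = 1_000_000
SYNAPSES_PER_CHIP = 256_000_000
POWER_PER_CHIP_MW = 70


def synapses_per_neuron() -> float:
    """Average synapses available per neuron on one chip."""
    return SYNAPSES_PER_CHIP / NEURONS_PER_CHIP


def nanowatts_per_neuron() -> float:
    """Chip power divided evenly across neurons (1 mW = 1,000,000 nW)."""
    return POWER_PER_CHIP_MW * 1_000_000 / NEURONS_PER_CHIP


def chips_needed(neurons: int, synapses: int) -> int:
    """Naive linear estimate: whichever of neurons or synapses runs out first."""
    by_neurons = math.ceil(neurons / NEURONS_PER_CHIP)
    by_synapses = math.ceil(synapses / SYNAPSES_PER_CHIP)
    return max(by_neurons, by_synapses)


def power_budget_mw(neurons: int, synapses: int) -> int:
    """Total power in milliwatts under the same naive linear assumption."""
    return chips_needed(neurons, synapses) * POWER_PER_CHIP_MW


if __name__ == "__main__":
    print(synapses_per_neuron(), "synapses per neuron")
    print(nanowatts_per_neuron(), "nW per neuron")
    # A hypothetical network of 10 million neurons and 1 billion synapses.
    print(chips_needed(10_000_000, 1_000_000_000), "chips,",
          power_budget_mw(10_000_000, 1_000_000_000), "mW")
```

On these figures each neuron has 256 synapses available and draws about 70 nW on average, which gives a sense of why dedicated hardware is attractive for scaling up.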

Robot Embodiment

Once a rudimentary autonomous android has been implemented in simulation and enough interested participants have been attracted, we hope to pool resources to acquire or build a humanoid robot that can be shared between the core local team, perhaps housed in a local maker-space. Modest fund-raising may be achieved via one or more of the various community crowdfunding services, such as Kickstarter, Indiegogo, PetriDish or Experiment.

1. Descartes' Error: Emotion, Reason, and the Human Brain, Antonio Damasio, Penguin Books, 2005 (ISBN 978-0143036227).

2. A world survey of artificial brain projects, Part I: Large-scale brain simulations, Hugo de Garis, Chen Shuo, Ben Goertzel, Lian Ruiting, Neurocomputing, Volume 74, Issues 1–3, December 2010, Pages 3–29.

3. World Survey of Artificial Brains, Part II: Biologically Inspired Cognitive Architectures, B. Goertzel, R. Lian, I. Arel, H. de Garis, S. Chen, Neurocomputing, September 2010.

4. Going Deeper with Convolutions, Christian Szegedy, Wei Liu, Yangqing Jia, Pierre Sermanet, Scott Reed, Dragomir Anguelov, Dumitru Erhan, Vincent Vanhoucke, Andrew Rabinovich, Computing Research Repository, abs/1409.4842, 2014 (http://arxiv.org/abs/1409.4842).

5. Bootstrapping Grounded Word Semantics, Steels, L. and Kaplan, F., in Briscoe, T. (ed.), “Linguistic evolution through language acquisition: formal and computational models”, Cambridge University Press, 1999.

6. Grounded Symbolic Communication between Heterogeneous Cooperating Robots, David Jung and Alexander Zelinsky, Autonomous Robots, special issue on Heterogeneous Multi-robot Systems, Kluwer Academic Publishers, Balch, Tucker and Parker, Lynne E. (eds.), vol 8, no 3, pp 269-292, June 2000 (http://dx.doi.org/10.1023/A:1008929609573).

7. The Theory of Affordances, James J. Gibson, in Perceiving, Acting, and Knowing, edited by Robert Shaw and John Bransford, 1977 (ISBN 0-470-99014-7).

8. Information Theory and Evolution, 2nd Ed., John Scales Avery, 2012 (ISBN 978-9814401234).

9. Whatever happened to information theory in psychology?, Luce, R. Duncan, Review of General Psychology, Vol 7(2), Jun 2003, 183-188 (http://dx.doi.org/10.1037/1089-2680.7.2.183).

10. Claude Shannon, 1916–2001, Devlin, K., Focus: The Newsletter of the Mathematical Association of America, Vol 21(5), 20–21, May 2001.

11. The Evolution of Cognitive Control, Dietrich Stout, Topics in Cognitive Science, 2010, Volume 2, Issue 4, Pages 614-630 (http://dx.doi.org/10.1111/j.1756-8765.2009.01078.x).

12. Hierarchical apprenticeship learning with application to quadruped locomotion, Kolter, J. Z., Abbeel, P., and Ng, A. Y., Advances in Neural Information Processing Systems (NIPS), 2007.

13. Autonomous Helicopter Aerobatics through Apprenticeship Learning, Pieter Abbeel, Adam Coates, Andrew Y. Ng, International Journal of Robotics Research, Volume 29, Issue 13, November 2010, Pages 1608-1639 (http://dx.doi.org/10.1177/0278364910371999).

14. Playing Atari with Deep Reinforcement Learning, Volodymyr Mnih, Koray Kavukcuoglu, David Silver, Alex Graves, Ioannis Antonoglou, Daan Wierstra, Martin Riedmiller, Neural Information Processing Systems (NIPS), Deep Learning Workshop, 2013 (http://arxiv.org/abs/1312.5602v1).

15. Q-Learning-Based Financial Trading Systems with Applications, Corazza, Marco and Bertoluzzo, Francesco, University Ca’ Foscari of Venice, Dept. of Economics Working Paper Series No. 15/WP/2014 (http://dx.doi.org/10.2139/ssrn.2507826).

16. Steps toward artificial intelligence, Minsky, M. L., in E. A. Feigenbaum & J. Feldman (Eds.), Computers and Thought (pp. 406-450), New York, NY: McGraw-Hill, 1963.

17. Theory and Application of Reward Shaping in Reinforcement Learning, Laud, A. D., PhD thesis, University of Illinois at Urbana-Champaign, 2004.

18. Affective Neuroscience: The Foundations of Human and Animal Emotions (Series in Affective Science), Jaak Panksepp, 2004 (ISBN 978-0195178050).

19. The Archaeology of Mind: Neuroevolutionary Origins of Human Emotions (Norton Series on Interpersonal Neurobiology), Jaak Panksepp, 2012 (ISBN 978-0393705317).

20. Cognitive Psychology and its Implications, J. R. Anderson, 2010 (ISBN 978-1429219488).

21. Attention and consciousness: Related yet different, C. Koch and N. Tsuchiya, Trends in Cognitive Sciences, vol. 16, pp. 103–105, 2012 (http://dx.doi.org/10.1016/j.tics.2011.11.012).

22. Self Comes to Mind: Constructing the Conscious Brain, Antonio Damasio, 2012 (ISBN 978-0307474957).

23. The Ego Tunnel: The Science of the Mind and the Myth of the Self, Thomas Metzinger, 2010 (ISBN 978-0465020690).

24. Creation of a Conscious Robot: Mirror Image Cognition and Self-Awareness, Junichi Takeno, Pan Stanford Publishing, 2012 (ISBN 978-9814364492).

25. Why Red Doesn’t Sound Like a Bell: Understanding the feel of consciousness, J. Kevin O’Regan, Oxford University Press, 2011 (ISBN 978-0199775224).

26. Consciousness and Robot Sentience, Pentti O Haikonen, Series on Machine Consciousness (Book 2), World Scientific Publishing Company, 2012 (ISBN 978-9814407151).

27. The Neurology of Eye Movements, R. John Leigh, Contemporary Neurology Series (Book 70), Oxford University Press, 4th ed., 2006 (ISBN 978-0195300901).

28. Place cells, grid cells, and the brain’s spatial representation system, Moser EI, Kropff E, Moser MB, Annu Rev Neurosci., 31:69-89, 2008 (http://dx.doi.org/10.1146/annurev.neuro.31.061307.090723).

29. Mechanisms for Stable, Robust, and Adaptive Development of Orientation Maps in the Primary Visual Cortex, Jean-Luc R. Stevens, Judith S. Law, Ján Antolík and James A. Bednar, The Journal of Neuroscience, 33(40): 15747-15766, 2 October 2013 (http://dx.doi.org/10.1523/JNEUROSCI.1037-13.2013).

30. Multisensory maps in parietal cortex, Sereno, M.I. & Huang, R.S., Current Opinion in Neurobiology 24, 39-46, 2014 (http://dx.doi.org/10.1016/j.conb.2013.08.014).

31. A million spiking-neuron integrated circuit with a scalable communication network and interface, Merolla, P. A.; Arthur, J. V.; Alvarez-Icaza, R.; Cassidy, A. S.; Sawada, J.; Akopyan, F.; Jackson, B. L.; Imam, N.; Guo, C.; Nakamura, Y.; Brezzo, B.; Vo, I.; Esser, S. K.; Appuswamy, R.; Taba, B.; Amir, A.; Flickner, M. D.; Risk, W. P.; Manohar, R.; Modha, D. S., Science 345 (6197): 668, 2014 (http://dx.doi.org/10.1126/science.1254642).